Introduction

The project design sets out a programme for investigating preservation (storage methods), reuse (usability) and dissemination (delivery mechanism) strategies for exceptionally large data files generated by archaeologists, researchers and cultural resource managers undertaking fieldwork and other research.

The data in question is typified by formats with exceptionally large file sizes; the project is concerned in particular with the technologies associated with their storage and delivery. The generation and use of such data for research is increasing in specific fields of archaeological and cultural resource management activity (maritime archaeology and surveying, laser scanning, LiDAR, computer modelling and other scientific research applications). Yet there is little understanding of the implications for cost and good practice in data preservation, dissemination, reuse and access. This lack of understanding is potentially exacerbated by the proprietary nature of the formats generally used by the new research technologies now being adopted in archaeology and cultural resource management.

The project seeks to answer immediate questions regarding cost and to develop recommendations and strategies for archaeologists, researchers, cultural resource managers and archivists dealing with ‘Big Data’.

The project recognises that computing capacity, both to create and to archive data, will continue to rise. The aims of the project consequently address generic and strategic issues as well as the immediate questions posed by ‘Big Data’ today.

The final report of the Big Data Project is now available for download – PDF (845 KB)

Overview

Aims of the ‘Big Data’ Project:

Preliminary and Ongoing Investigations:

- Define ‘Big Data’ and the technologies, delivery mechanisms, storage methods and activities in archaeology, research and cultural resource management currently generating such data.

- Identify and review, during the period of the project, the circumstances under which the technologies that generate ‘Big Data’ formats are deployed.

- Identify, describe and characterise the data formats used in ‘Big Data’ projects and their relationship to storage, delivery and reusability.

- Identify a representative list of creators and users of ‘Big Data’.

- Identify existing good practice in related fields. As this is an exploratory project for archaeology, research and cultural resource management, this will necessarily include a review of policies and services offered in other related scientific fields, e.g. National eScience Centre, Natural Environment Research Council (NERC), Council for the Central Laboratory of the Research Councils (CCLRC), British Oceanographic Data Centre (BODC), British Atmospheric Data Centre (BADC), US Geological Survey (USGS) or even the European Space Agency (ESA), National Aeronautics and Space Administration (NASA), National Geophysical Data Centre at Boulder, Colorado, USA or Conseil Européen pour la Recherche Nucléaire (CERN). The petroleum exploration industry also generates considerable holdings of 3D seismic data for the North Sea. Maritime archaeology has close and important links with Defra, which will also need to be consulted, in particular Defra’s appointed Marine ALSF Science Co-ordinator Dr Richard Newell and the new Marine Data & Information Partnership.

- Determine existing suitable repositories for ‘Big Data’ under a distributed archiving model. This would allow the ADS to act as a pointer to archives held elsewhere. The AHRB project outlined below, for example, will be archived at the AHDS repository, while other project data may be archived at other suitable sites.

- Identify best practice in terms of cost for the storage of ‘Big Data’ in its raw and unprocessed form. This will include the best formats for storage (e.g. ASCII) and the best policies regarding file processing prior to archiving (e.g. compression).
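
As an illustration of the kind of comparison the last aim implies, the following minimal Python sketch measures how much space lossless compression saves on ASCII survey data. It is not part of the project's method: the function names, the "x y z" line layout and the randomly generated points are all assumptions made for this example, and gzip simply stands in for whichever compression scheme an archive might choose.

```python
import gzip
import random


def make_ascii_points(n, seed=0):
    """Build n hypothetical 'x y z' survey points as ASCII text (illustrative data only)."""
    rng = random.Random(seed)
    lines = []
    for _ in range(n):
        x = rng.uniform(0, 100)
        y = rng.uniform(0, 100)
        z = rng.uniform(0, 10)
        lines.append(f"{x:.3f} {y:.3f} {z:.3f}")
    return ("\n".join(lines) + "\n").encode("ascii")


def compression_summary(raw):
    """Compress raw bytes with gzip and report sizes plus a lossless round-trip check."""
    compressed = gzip.compress(raw)
    restored = gzip.decompress(compressed)
    return {
        "raw_bytes": len(raw),
        "compressed_bytes": len(compressed),
        "ratio": len(compressed) / len(raw),
        "lossless": restored == raw,
    }


if __name__ == "__main__":
    print(compression_summary(make_ascii_points(10_000)))
```

The round-trip check matters as much as the ratio: a preservation policy can only rely on compression if the original file is recovered byte for byte.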

To Carry out a User Survey:

- Identify and make recommendations on existing standards amongst users in archaeology, research and cultural resource management and current good practice in the generation, use, delivery, preservation and storage of ‘Big Data’.

- Identify and make recommendations on the potential uses and reuses of such ‘Big Data’.

- Identify the likely amount of re-use of the various elements of ‘Big Data’ sets and any appropriate time periods for which they remain valid.

- Address issues regarding archiving discard policies and data selection policies.

- Address issues raised by the use of proprietary formats.

- To assess potential future developments in the costs of storage and the implications for future policy.

To Carry out Preservation and Data Access work on Suitable Pilot Studies:

- Investigate, set out and make recommendations on the preservation and storage options available for ‘Big Data’.

- Identify, describe and characterise the required documentation for the preservation and reuse of ‘Big Data’ formats.

- Identify, describe and characterise the interpretive processes applied to ‘Big Data’ and relate these to the documentation issues.

- Identify preservation (storage), dissemination (delivery) and reusability options and costs, specifically to:

- Identify issues related to online dissemination and make recommendations for the dissemination and delivery of data.

- Identify issues regarding proprietary formats and make recommendations regarding their effects on storage, delivery and reuse.

- Investigate and refine an appraisal mechanism for assessing large-scale digital archives in the light of archiving discard policies.

- To assess the potential for likely re-use of the different types of data as an indicator of archive suitability.

- Explore cost models for setting threshold limits on archive sizes, or on how long archives need to be kept if they are not accessed at all.
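
To make the idea of a retention threshold concrete, here is a minimal Python sketch of one possible cost model. It is purely illustrative and is not the ADS costing model: the function names, the per-gigabyte annual charge, the assumption that storage prices fall by a fixed fraction each year, and all figures used are hypothetical.

```python
def retention_cost(size_gb, years, cost_per_gb_year, annual_decline=0.0):
    """Total storage cost of keeping size_gb for a whole number of years.

    The per-GB yearly charge is assumed to fall by the fraction
    annual_decline after each year (a hypothetical simplification).
    """
    total = 0.0
    rate = cost_per_gb_year
    for _ in range(years):
        total += size_gb * rate
        rate *= 1.0 - annual_decline
    return total


def max_years_within_budget(size_gb, budget, cost_per_gb_year,
                            annual_decline=0.0, horizon=100):
    """Largest whole number of years affordable within budget, capped at horizon."""
    years = 0
    while (years < horizon and
           retention_cost(size_gb, years + 1, cost_per_gb_year,
                          annual_decline) <= budget):
        years += 1
    return years


if __name__ == "__main__":
    # Hypothetical figures: a 100 GB archive charged at 2.0 units per GB per year.
    print(retention_cost(100, 5, 2.0))
    print(max_years_within_budget(100, 1000, 2.0))
```

A model of this shape could be inverted to set a size threshold instead: fix the retention period and budget, then solve for the largest archive that fits.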

Project Dissemination Aims:

- To encourage and review debate in the user community.

- To disseminate the results of the project through conference papers or a conference session.

- To produce a ‘Big Data’ report.

- The above report and discussion will address generic and strategic issues regarding the archiving and reuse of ‘Big Data’ with the future in mind.

- The project will have its own website hosted by the ADS where interested parties can access information and findings related to ‘Big Data’. This website will link to the Heritage3D site and will complement rather than duplicate the information available there.

Case Studies

Three pilot projects generating ‘Big Data’ have been identified. The process of archiving these projects will allow the assessment of preservation, archiving, dissemination and reuse issues associated with large datasets. These projects cover three of the most common sources of ‘Big Data’ currently produced by archaeologists: maritime archaeology, 3D scanning and LiDAR surveys.

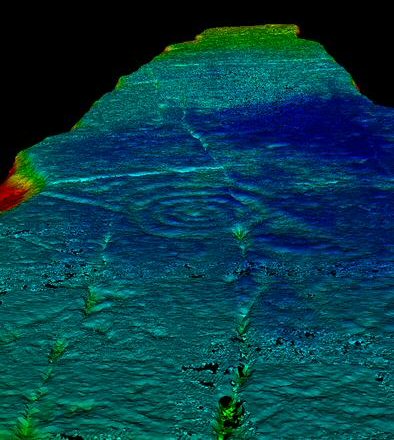

Wessex Archaeology: have been successful in receiving ALSF funds via EH for the Wrecks on the Seabed Project round 1. The project will contain data relating to the investigation of submerged archaeological sites using geophysical tools and diver-based techniques. Wessex Archaeology are already in discussion with the ADS regarding costings for maritime projects and a suitable pilot will be selected for the ‘Big Data’ project.

Durham University: have an AHRB-funded project called Breaking through rock art recording: three-dimensional laser scanning of megalithic rock art. They have a full range of scanning data, having scanned all the stones of Castlerigg looking for rock art. Sample data has already been sent to the ADS and an assessment is being drawn up for archival purposes. This project should already have budgeted for data documentation and archive preparation. View this archive online.

English Heritage: have a few projects (so far) using LiDAR. The most suitable of these is the ‘Where Rivers Meet’ project (ALSF 3349). Most data from projects using LiDAR are held by the Environment Agency, who will need to be consulted on archiving issues. It is possible that, under the distributed archiving model, archiving will ultimately take place at the Environment Agency.

Audits

A series of forms has been designed to document in detail the archival process for these projects, in terms both of the human and physical resources required and of the suitability of the data for dissemination and long-term curation.

Questionnaire

Download the Big Data Questionnaire results

Formats

Download the Big Data Review of nature of technologies and formats

Workshop, November 17th 2005

A successful workshop looking in depth at ‘Big Data’ issues took place in November. The meeting was hosted within the King’s Manor in York. The workshop was chaired by Julian Richards, Director of the ADS. A series of presentations was made to participants, interspersed with lively debate. Keith May spoke first on why English Heritage (EH) had commissioned the ‘Big Data’ project.

The speakers that followed have experience of ‘Big Data’ with organisations outside of archaeology. Mark Dunkley talked on EH involvement with other government organisations such as the UK Hydrographic Office (UKHO) in terms of data sharing and archiving of ‘Big Data’. David Barber then described the EH-funded “Heritage 3D” project, which has synergies with the ‘Big Data’ project but concentrated mainly on the survey specification for carrying out laser scanning rather than on how to archive the data. Jerry Giles gave a very interesting overview of one of the Natural Environment Research Council (NERC) data centres, of which he is manager.

After lunch, speakers described the three case studies being used by the ‘Big Data’ project to help formulate management and preservation strategies. John Gribble of Wessex Archaeology described the “Wrecks on the Seabed” project and the problems associated with the large datasets generated by underwater techniques such as detailed magnetometry, sub-bottom and multibeam bathymetry survey. Michael Rainsbury from Durham University talked about the “Breaking through rock art recording: three-dimensional laser scanning of megalithic rock art” project, which scanned examples of rock art in Northumberland. Vince Gaffney of the University of Birmingham introduced us to the “Where Rivers Meet” project, which used LiDAR survey data as its base. The survey data was supplied by an external organisation, which raised interesting questions about copyright. Vince also briefly described the ‘North Sea Seismic’ project, which is using very large base datasets (terabytes), again supplied externally.

The final session was presented by Big Data project staff, Tony Austin and Jen Mitcham, who described progress so far, including an initial analysis of an ongoing online questionnaire about big data users and usage (this is no longer available, but the results are) and an overview of data audits undertaken on the case studies. A possible preservation and dissemination strategy for the rock art case study was also demonstrated.

- Agenda

- Participants

- Powerpoints

- Notes

Deliverables

The key outcomes of the project will be raised awareness and recommendations on preservation and management strategies for exceptionally large data formats. In particular:

- Preservation issues and practices for ‘Big Data’ archives.

- Dissemination issues and policy practices for ‘Big Data’ archives.

- Alternative approaches to data archiving strategies, especially concerning ‘Big Data’ archives including the potential for likely re-use of different types of data as an indicator of archive suitability.

- Users and uses of ‘Big Data’.

- Cost implications of different approaches to ‘Big Data’ preservation and dissemination.

In achieving the above outcomes nine specific deliverables will be generated:

- Interdisciplinary review of literature and good practice relevant to digital archivists, fieldworkers and researchers working with ‘Big Data’ in archaeology and cultural heritage management (outcome of the literature review is represented by footnotes in the documents below)

- Representative directory and interest list of users and uses for ‘Big Data’ in the UK (73% of respondents to the ‘big data’ online questionnaire agreed to join such a list).

- A user survey (results from the online questionnaire).

- Documented review and debate regarding preservation and dissemination / reuse options for ‘Big Data’ (a successful workshop was held in York in November 2005).

- Case studies (pilot studies) of current projects demonstrating the cost implications of the preservation and reuse options identified above (once procedures were established for a particular format it was found that Big Data fitted into the current ADS lifecycle costing model).

- Three completed ‘Big Data’ archives, resulting in appropriately preserved data sets and access to data (dissemination) according to best practice as defined by the project, covering data generated by maritime archaeology, laser scanning and LiDAR technologies. These archives will be stored for a five-year period at suitable repositories on a distributed model determined by cost and suitability for access.

- Characterisation and preservation / reuse / dissemination recommendations on other data formats highlighted during the activity of the project (the formats review).

- Dissemination of the main contents of the ‘Big Data’ report through conference papers or session (following an interim presentation at the 2006 IFA conference the final outcomes were disseminated at CAA 2007).

- Completion of the ‘Big Data’ Report: bigdata_final_report_1.3.pdf (845KB)

Staff

Archaeology Data Service:

Project design: Dr Jonathan Kenny

Project manager: Tony Austin

Project staff: Jen Mitcham, Dr Keith Westcott

English Heritage: Historic Environment Commissions:

Casework manager: Tim Cromack

Commissions advisor: Keith May