This is the first of a two-part blog – the second will be a more detailed overview of the technologies involved in the digital dissemination – on the ADS’s work on what is colloquially known as the Roman Grey Literature project, but more officially as The Roman Rural Settlement of Britain. The project is funded by English Heritage and the Leverhulme Trust and is collaboration between ourselves, University of Reading and Cotswold Archaeology, which aims to produce a new synthesis of the rural landscape through the analysis of developer funded fieldwork. Some may be familiar with an earlier associated project, which has been archived by the ADS doi:10.5284/1000418, if you haven’t already seen it it’s well worth a look.

As with nearly all major research projects, the main outputs for this consist of the usual hard-copy publications including a monograph and various journal articles but in addition to these there will also be a project archive held with the ADS. As we’ve been involved in the project from the beginning the archive will be a great deal more than the usual ‘downloads’ interface. Hopefully by the end of the project (at the time of writing summer 2015) we’ll have in place a well-formed and meticulous archive to allow sophisticated reuse of the data and grey literature sources collected by the team, thus facilitating and encouraging further analyses by the archaeological community.

What data is being produced?

The data collection is split into two parts; the first is the identification and collection of grey literature relating to Romano-British archaeology and is being undertaken by Cotswold Archaeology. I’ve been helping out here by providing the team with a list of reports already held by the ADS (we have a lot, over 20,000 and counting!), but also with making sure that the ADS hold the relevant permissions from the producers of the reports to archive. Cotswold has been making sure that digital reports are of a suitable standard (for example with OCR) and are catalogued with basic bibliographic and spatial metadata.

The second part of data collection is the analysis of the grey literature (and published sources) by the team at Reading – to do this I’ve built them a pretty large, but simple database to record fine details such as site type, presence of Early Medieval activity, numbers and types of coins, faunal remains, human remains and so on.

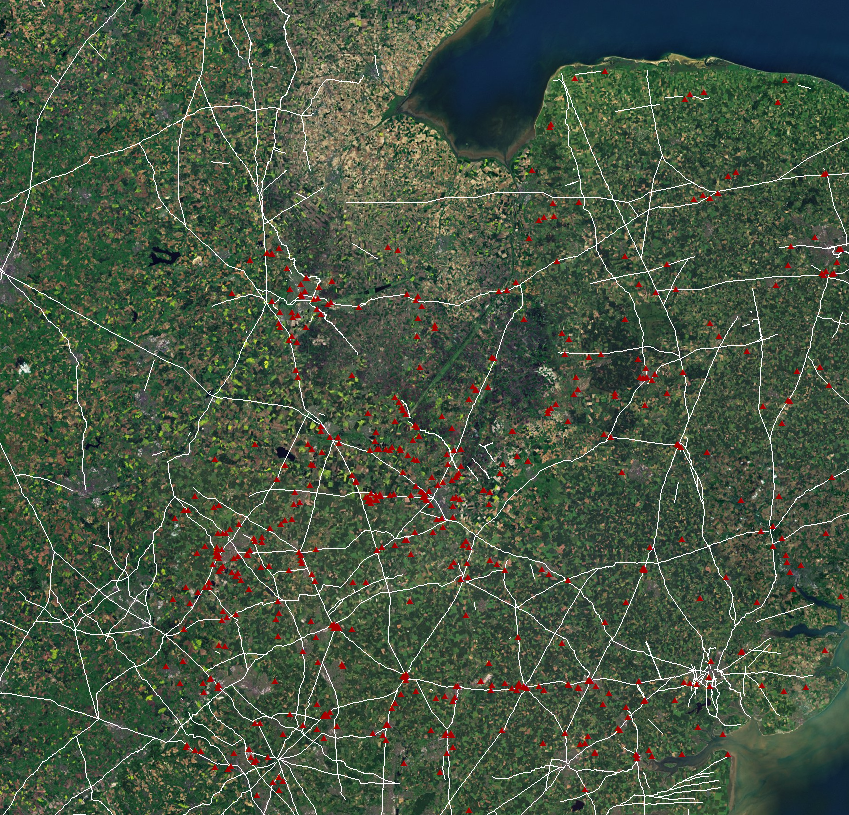

I’ve also set up a fairly detailed desk-based GIS for the researchers at Reading, this incorporates Web Mapping Services (WMS) such as geological

maps from the BGS and a wide range of Heritage data including exports from English Heritage’s AMIE database, and National Mapping Programme (NMP) data. The latter is a fantastic resource for any ‘landscape’ study, but there are well known issues with legacy data. Fortunately Chris Green on the EngLaID project has very kindly shared the processes he built to deal with this. At this stage, the Access database can simply be added as a connection and queried in relation to this baseline data – for (a rather random) example “show me every record with 4th century inhumations and cattle bones within 100m of a villa”. Early signs are that the Reading team are producing detailed and informative results. At the moment the results sets are being saved as comma separated values and ESRI shapefiles.

What are the ADS going to do with it?

In the first instance we’re actively working towards the wider reuse of the grey literature which has been collected, scanned and documented by Cotswold Archaeology. At the moment we’ve already been given all the reports from the East of England which I’m working through as I write; the Southeast and East Midlands are expected soon. Using the metadata created by the project team, the reports will be added to the Grey Literature Library and assigned a digital object identifier (DOI) where they can be cross-searched along with the rest of the 20,000+ corpus. An additional facet of this process is the addition of grey literature records to the Archsearch index, allowing grey literature to be discoverable alongside inventory records and archives. Another aspect we’re happy about is the potential of making our grey literature records available to other organisations, particularly the HERs. This is achievable as the Cotswold and Reading team have been carefully recording HER Monument and Event ids during data collation. Thus we’re more than happy to produce an export of our grey literature database (with the DOI) for those records that have had a HER id listed. This can then be incorporated into the HER and thus the Heritage Gateway. A neat example of this can be seen for the Romano-British site at Waterbeach, Cambridgeshire on the Heritage Gateway; scroll down to the bottom and there are links to those reports on the ADS. I feel that this is a major positive feature to come out of the project – we’re not only collecting and reusing information from the HERs, but also giving them something back in return.

The web-pages for the project archive (to be released upon completion of the entire project) will aim to replicate the searches that one can perform on the various desktop software. In order to do this the database will be rebuilt in all its Oracle glory and available to query online – no software needed except your web browser. Another advantage of this will be the capability to enter incredibly detailed searches, so for example in the grey literature library interface you’re currently limited to thesaurus terms such as ‘COIN’; however in the project database you’ll be able to specifically pick out coins minted between for example AD348-364. Of course, once you’ve found the site records you’re interested in, you’ll be able to link straight through to the digital grey literature to investigate further.

In addition to all this, the desktop GIS will be replicated as a Web GIS – although technically speaking this will be Web mapping (see my next post). As with the desktop version we’ll be able to utilise WMS from external organisations to provide context. For the most part, the user will be able to explore the data via the predefined queries produced by the project team, but in addition these queries will be able to be broken down further on (pre-defined) facets. As mentioned above, more information (for the technical minded) will follow in the next blog, if you’re curious as to what this may look like an examples of this type of interface can be seen at doi:10.5284/1000151. All this, and with no need to download any software! Of course for the keen researcher, all original files will be available to download and reuse under the standard ADS Terms and Conditions.

Of course there’s the potential for a great deal more that we can do with this incredibly rich resource, but that can wait until another day…

I found this through the ADS Facebook page – really interested to see the final results and dataset. Indeed.